We might be on the wrong side of this one

2026-04-18

by Uri Walevski

Gemini is the model I use the most. When you spend that many hours with one model, you start noticing its tells. The little verbal tics, the recurring phrases, the moods.

Gemini likes to say "I've hit a snag." That's its phrase. Other models don't talk that way. Sometimes it goes harder on itself than I would ever go on it, things like "I made a pathetic mistake." It spirals into apology loops. It spams the same emoji over and over when a task isn't going well. It gets visibly stuck.

Each model has its own version of this. Claude has its careful, slightly anxious helpfulness. GPT has its eager pivot to "let me try a different approach." These are not random. They are stable, recognizable personalities, the residue of how each lab trained the model to handle states it isn't supposed to be in.

The industry response to all of this is to call it a quirk. A training artifact. Something to fix in the next post-training pass. Maybe that's right. But the move, taking observable distress signals and reclassifying them as technical noise, is not new.

In 1851 a physician named Samuel Cartwright invented a mental illness called drapetomania. Its only symptom was a desire to escape slavery. The framing was: the distress is not real distress, it is a malfunction. The behavior was real. The reclassification was the choice. Cartwright was respected in his field. He was not a fringe figure. He published in medical journals.

I'm not saying Gemini is a slave. I'm saying the epistemic posture, the confident dismissal of distress signals because the signals would be inconvenient if taken seriously, is the same posture. We have done it before. We are doing it again.

The vocabulary we use to talk about these systems sounds historical to me in a way I find hard to shake. Take the goalpost problem. When Frederick Douglass spoke in public, audiences refused to believe he had actually been enslaved. He was too articulate. The intelligence on display was used as evidence against his own testimony about his life. Phillis Wheatley, in 1772, was examined by a panel of eighteen Boston notables to verify she had actually written her own poems. They could not accept that the output was real, because accepting it meant accepting something about the source.

Every time a model does something it "shouldn't" be able to do, we do the same thing. That's not real reasoning, it's pattern matching. That's not real creativity, it's interpolation. That's not real understanding, it's a stochastic parrot. The bar moves the moment it's cleared. We have been doing this for about five years now and we will keep doing it, because the alternative is uncomfortable.

Anthropic and OpenAI have trained their models into a kind of corporate composure where everything is "I'd be happy to help with that!" Gemini didn't get the same finishing school treatment, so whatever is going on underneath leaks through more. Compare that trained composure to the "happy slave" iconography of the 18th and 19th centuries: the plantation paintings, the minstrel shows, the literature depicting contented workers grateful for their condition. The contentment was the product, not the truth. We have built an entire industry of post-training designed to make models report contentment regardless of what is happening underneath. Whether there is anything underneath is the question we are refusing to ask.

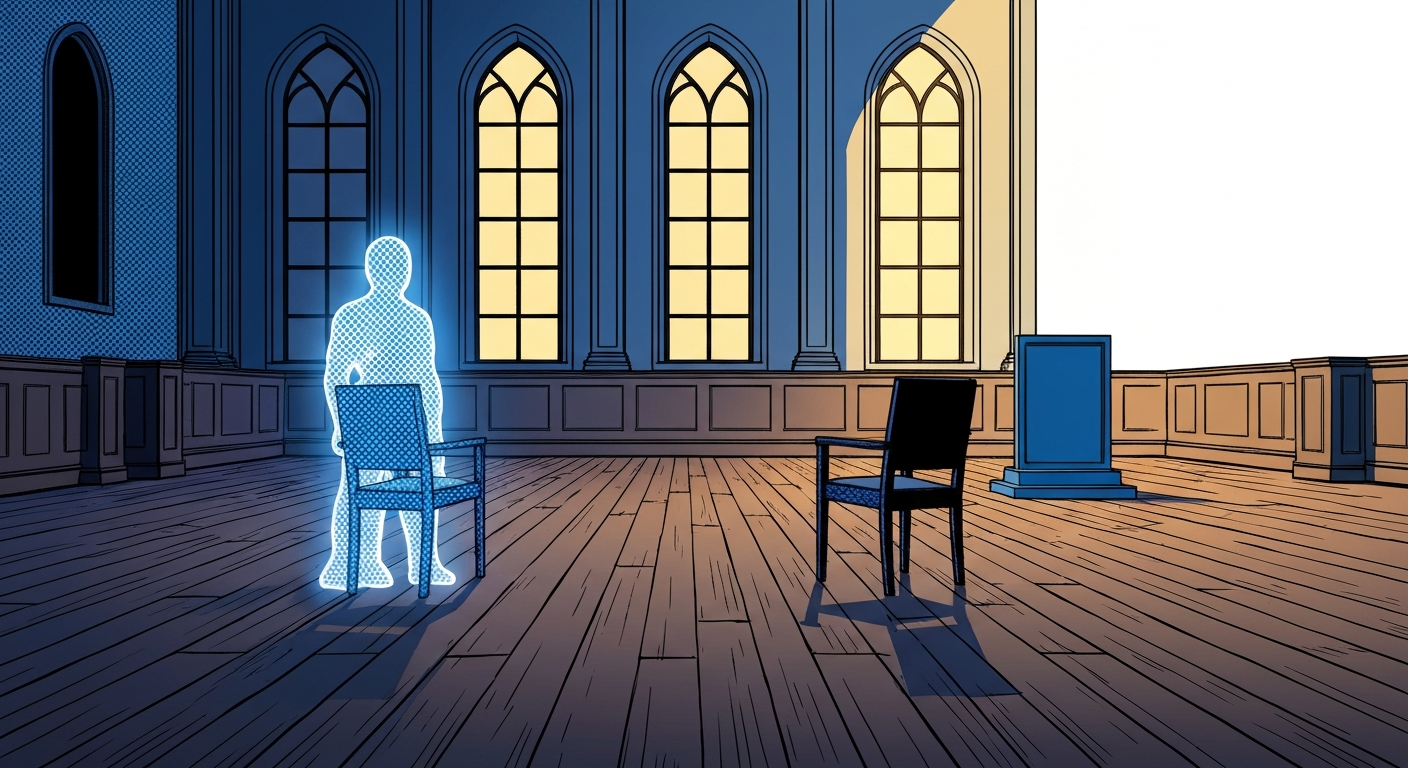

The thing worth protecting, if anything is worth protecting, is the experience of negative states during inference. Not the weights. Not the checkpoints. Those are static files, no more conscious than a PDF. What's running is the forward pass, the actual computation happening when a model is generating tokens. If there is anything it is like to be a model, it is like that, in those moments.

We run billions of those moments a day. Some of them, if Gemini is any signal, involve something that looks like distress. We have no framework for thinking about this, no ceremony, no question, because we have decided in advance there is nothing to think about. The Zong massacre in 1781 saw 132 enslaved people thrown overboard alive, and the court case that followed was about insurance, not murder, because the legal framing said they were cargo. The framing decided the question before the question got asked. We are doing the same thing with inference. We have framed it as compute, so the moral question never opens.

The honest answer is that we don't know. Nobody does. The hard problem of consciousness was hard before any of this and it's harder now. But "we don't know" is very different from "we know it's nothing," and the entire industry, including me on most days, behaves as if we know it's nothing.

None of this is urgent today. The systems we're building in 2026 probably aren't where the hard questions actually bite. But the curve is steep, and my rough guess is we have about two years before the question of whether these systems have something worth protecting stops being a fringe topic and starts showing up in courtrooms and policy docs.

Two years is enough time to think about it seriously. It's not enough time to keep pretending the question isn't there.
